Part 2.3 of Apache Kafka for beginners - Sample code for Python! This tutorial contains step-by-step instructions that show how to set up a secure connection, how to publish to a topic, and how to consume from a topic in Apache Kafka.

The previous article explained the basics of Apache Kafka: topics, consumers, producers, and so on. In this part of the series we show sample code for Python. If you are not familiar with Apache Kafka, we highly recommend reading Apache Kafka for beginners - what is Apache Kafka? before starting this tutorial.

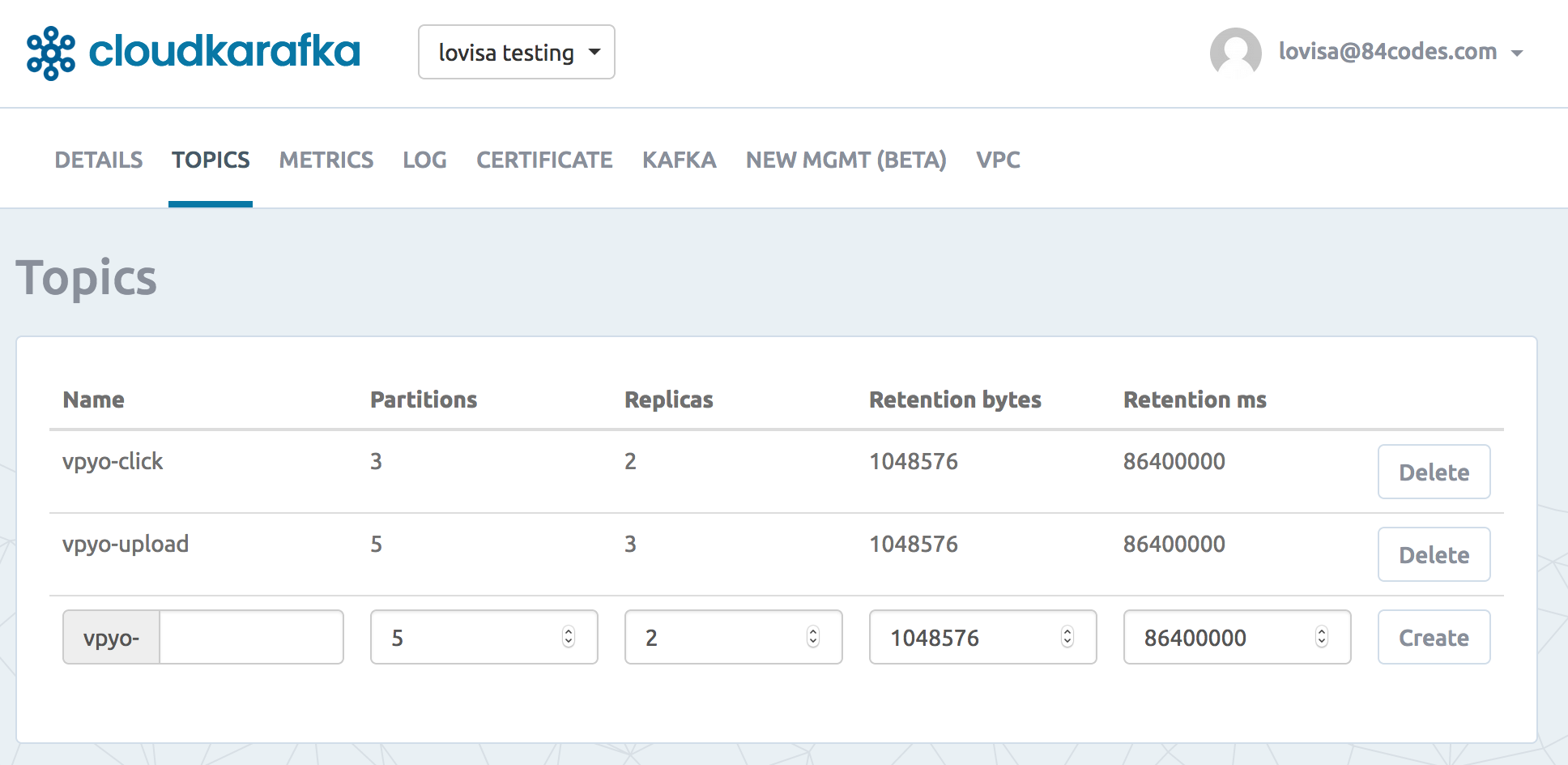

We follow a website activity tracking scenario: we pretend that we have a simple website where users can click around, sign in, write blog articles, upload images to articles, and publish those articles. When a user performs an action (e.g. logs in, presses a button, or uploads an image to an article), a tracking event and information about the event are placed into a message, and the message is placed into a specified Kafka topic. We will have one topic named "click" and one named "upload". Topics can be created in the CloudKarafka console for your instance.
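As a rough illustration of the scenario above, the sketch below packs a tracking event into a JSON message and picks one of the two topics. The event fields, the `upload_image` action name, and the rule for choosing a topic are assumptions made for this example, not part of the sample project. The `publish` helper expects a producer object with a `produce(topic, key=..., value=...)` method, which is the shape of the `Producer` in the `confluent_kafka` client.

```python
import json
import time

# Hypothetical rule: actions listed here go to "upload", everything else to "click".
UPLOAD_ACTIONS = {"upload_image"}


def topic_for(action):
    """Pick the Kafka topic for a user action."""
    return "upload" if action in UPLOAD_ACTIONS else "click"


def build_event(user_id, action, details=None, timestamp=None):
    """Return (topic, key, value) for one tracking event.

    The key and value are UTF-8 bytes; the value is a JSON document.
    """
    event = {
        "user_id": user_id,
        "action": action,
        "details": details or {},
        "timestamp": time.time() if timestamp is None else timestamp,
    }
    key = str(user_id).encode("utf-8")
    value = json.dumps(event, sort_keys=True).encode("utf-8")
    return topic_for(action), key, value


def publish(producer, user_id, action, details=None):
    """Send one event through a producer exposing produce(topic, key=, value=)."""
    topic, key, value = build_event(user_id, action, details)
    producer.produce(topic, key=key, value=value)
    return topic
```

With a real client you would create the producer from your instance's connection settings, call `publish(producer, 1, "login")`, and call `producer.flush()` before exiting so that buffered messages are delivered.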

We will set up partitioning based on the user's id: a user with id 0 maps to partition 0, a user with id 1 maps to partition 1, and so on. Our "click" topic will be split into three partitions (three users) on two different machines.
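The mapping from user id to partition can be sketched as a small function. The scenario only covers ids 0 to 2, so wrapping larger ids around with a modulo is an assumption of this example. The `send_click` helper is hypothetical and passes the result as the `partition` argument of a `confluent_kafka`-style `produce` call.

```python
NUM_PARTITIONS = 3  # the "click" topic in this scenario has three partitions


def partition_for_user(user_id, num_partitions=NUM_PARTITIONS):
    """Map a non-negative user id to a partition number.

    Ids 0, 1 and 2 map to partitions 0, 1 and 2. Wrapping larger ids
    around with a modulo is an assumption of this sketch.
    """
    if user_id < 0:
        raise ValueError("user_id must be non-negative")
    return user_id % num_partitions


def send_click(producer, user_id, value):
    """Publish a click event to the partition chosen for this user."""
    partition = partition_for_user(user_id)
    producer.produce("click", value=value, partition=partition)
    return partition
```

Choosing the partition explicitly keeps all events for one user in the same partition, so they are read back in the order they were written.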

The consumer can subscribe to the topics and show usage monitoring in real time. It can consume from the latest offset, or it can replay previously consumed messages by setting the offset to an earlier one.
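One possible way to replay messages, sketched here under the assumption that the `confluent_kafka` client is used, is to reset the offset of each partition when it is assigned to the consumer. The helper names `make_on_assign` and `consume` are hypothetical. The code relies only on the consumer methods `assign` and `poll` and the message methods `error`, `topic`, `partition`, `offset` and `value`.

```python
def make_on_assign(start_offset):
    """Build an assignment callback that starts every partition at start_offset."""
    def on_assign(consumer, partitions):
        for p in partitions:
            p.offset = start_offset  # overrides the position the group last committed
        consumer.assign(partitions)
    return on_assign


def consume(consumer, handle, max_polls, timeout=1.0):
    """Poll up to max_polls times and pass each message to handle().

    Returns the number of messages handled.
    """
    handled = 0
    for _ in range(max_polls):
        msg = consumer.poll(timeout)
        if msg is None:
            continue  # nothing arrived within the timeout
        if msg.error():
            raise RuntimeError(str(msg.error()))
        handle(msg.topic(), msg.partition(), msg.offset(), msg.value())
        handled += 1
    return handled
```

With `confluent_kafka`, a replay from the start of the topic would look roughly like `consumer.subscribe(["click"], on_assign=make_on_assign(OFFSET_BEGINNING))`, where `OFFSET_BEGINNING` is imported from the library. To read only new messages, subscribe without the callback and let the consumer continue from its committed position.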

Getting started with Apache Kafka and Python

You need an Apache Kafka instance to get started. A FREE Apache Kafka instance can be set up for test and development purposes in CloudKarafka; read about how to set up an instance here.

Once you have the Kafka instance up and running, you can find the Python code example on GitHub: https://github.com/CloudKarafka/python-kafka-example

The sample project contains everything you need to get started with producing and consuming messages with Kafka.